AI in Contingent Talent Rate Benchmarking


Eight Vendors. Same Role. Nobody Knows Which Rate Is Defensible.

Your quarterly business review with Vendor D is in two days.

They have been billing $118 per hour for a Senior Data Engineer in Singapore for the past eight months. You have a feeling that rate is high. Your procurement lead has a feeling it is high. But neither of you has the data to say so with confidence.

So the conversation goes the way it always goes. They present their slides. You ask some questions. You agree to "review the rate card for next quarter." Nothing changes.

This is a data failure, and it is one of the most consistent problems in contingent workforce programs. The problem is not that teams lack leverage with vendors; it is that they lack the specific numbers that make leverage real.

AI fixes this – not by negotiating for you but by giving you the table you should have walked in with.

Why Rate Discipline Is Hard

Rate cards have a shelf life. A rate card built in 2022 does not reflect the market in 2025. Roles evolve. Geographies shift. Supply and demand move. The market benchmark for a Senior Data Engineer in Singapore today is different from what it was eighteen months ago, and different again from what it is in Bangalore or Kuala Lumpur.

Most contingent workforce programs do not have a systematic process for refreshing rate benchmarks. The rate card gets negotiated at contract renewal, which happens every one or two years, and drifts in between. Vendors know this. They price accordingly.

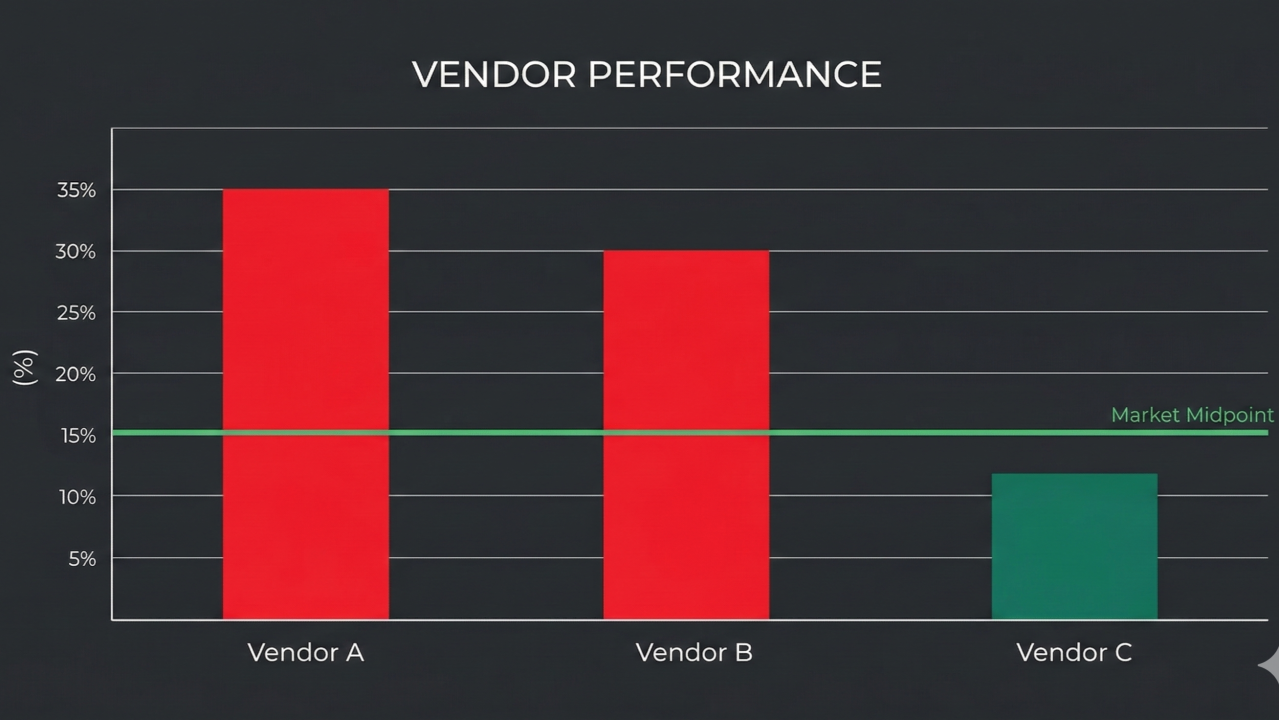

The second problem is fragmentation. Eight vendors billing different rates for functionally identical roles. Nobody has pulled all eight into a single view against a common benchmark. So each vendor relationship gets managed in isolation, and the variance across them is invisible until someone builds the table manually, which takes hours nobody has.

The third problem is that benchmark data exists but is scattered. Survey exports from Mercer or Radford. Published market data. Internal comp ranges. Rate submissions from previous tenders. All of it sits in different files and formats. Synthesising it into something usable requires work that keeps not getting done.

AI collapses that work from hours to minutes.

What AI Does Here

You are not asking Claude (or Copilot) to negotiate or to make decisions. You are asking it to do the analytical work that should precede every vendor conversation: pulling the data together, identifying outliers, and generating the talking points that turn a social call into an accountability conversation.

The tool for this is a workflow automation platform, specifically Gumloop, which is easy to learn and includes an agent that helps you build the automation. You build a simple pipeline: your bill rate data goes in as a CSV export, Claude/Copilot analyses it against benchmark data you provide, and a structured variance report comes out. The pipeline runs monthly and automatically, without anyone building a spreadsheet.

For a one-off analysis you can do the same thing directly in Claude/Copilot: paste your data, paste your benchmark, and ask for the comparison. The workflow simply makes the analysis repeatable without the manual effort each time.

The Practical Walkthrough

What data you need

Two inputs. First, your current bill rates by vendor, role, and geography: a simple export from your VMS or a spreadsheet your team maintains. Second, benchmark data for the same roles and geographies: a survey export, published market data, or even your own internal comp ranges as a proxy. If you lack a benchmark source, your AI agent can also run a market study and compile a report you can use.
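As a rough illustration of how the two inputs fit together, the Python sketch below joins a bill-rate export to a benchmark table on role and location. The column names (`vendor`, `role`, `location`, `bill_rate`, `p25`, `p50`, `p75`) are hypothetical, and every number except Vendor D's $118/hr and the $94/hr Singapore P50 is a placeholder, not market data. Adapt both to your own VMS export.

```python
import csv
import io

# Hypothetical column names; illustrative numbers only (not market data).
BILL_RATES_CSV = """vendor,role,location,bill_rate
Vendor D,Senior Data Engineer,Singapore,118
Vendor A,Senior Data Engineer,Singapore,96
Vendor E,Senior Data Engineer,Bangalore,44
Vendor C,Senior Data Engineer,Kuala Lumpur,70
"""

BENCHMARK_CSV = """role,location,p25,p50,p75
Senior Data Engineer,Singapore,86,94,104
Senior Data Engineer,Bangalore,33,38,45
"""


def load_rows(text):
    """Parse CSV text into a list of dicts keyed by the header row."""
    return list(csv.DictReader(io.StringIO(text)))


def join_rates_to_benchmarks(rates, benchmarks):
    """Attach P25/P50/P75 to each bill rate, matching on (role, location).

    Rates with no matching benchmark are returned separately so gaps in
    benchmark coverage stay visible instead of being silently dropped.
    """
    index = {(b["role"], b["location"]): b for b in benchmarks}
    matched, unmatched = [], []
    for r in rates:
        bench = index.get((r["role"], r["location"]))
        if bench is None:
            unmatched.append(r)
            continue
        matched.append({
            "vendor": r["vendor"],
            "role": r["role"],
            "location": r["location"],
            "bill_rate": float(r["bill_rate"]),
            **{p: float(bench[p]) for p in ("p25", "p50", "p75")},
        })
    return matched, unmatched


matched, unmatched = join_rates_to_benchmarks(load_rows(BILL_RATES_CSV), load_rows(BENCHMARK_CSV))
print(len(matched), [r["vendor"] for r in unmatched])  # 3 ['Vendor C']
```

Keeping unmatched rows visible matters: a rate with no benchmark (Kuala Lumpur here) is a coverage gap to fill, not a rate that passed the check.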

You do not need perfect data. Directionally accurate benchmark data is enough to identify the obvious outliers. The goal is not a precise statistical analysis. It is a defensible starting point for a commercial conversation.

What to ask Claude/Copilot

📋 Sample prompt:

"Here are current bill rates from six vendors for Senior Data Engineer roles across Singapore and Bangalore. Here is market benchmark data showing P25, P50, and P75 rates for the same roles and locations. Identify any vendor rates sitting above P65. Calculate the variance from P50 for each. Rank vendors by degree of overpricing. For the top two outliers generate a short vendor talking point I can use in a QBR."
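If you would rather assemble that prompt from exported files than paste by hand, a small template does the job. This is a sketch assuming the hypothetical CSV columns shown; the wording is the sample prompt above, with the vendor count and locations filled in from the data rather than typed.

```python
import csv
import io

# Illustrative rows only; in practice these come from your exports.
RATES_CSV = """vendor,role,location,bill_rate
Vendor D,Senior Data Engineer,Singapore,118
Vendor E,Senior Data Engineer,Bangalore,44
"""
BENCHMARK_CSV = """role,location,p25,p50,p75
Senior Data Engineer,Singapore,86,94,104
Senior Data Engineer,Bangalore,33,38,45
"""

# Backslash-newline joins the instruction lines into one paragraph.
PROMPT_TEMPLATE = """Here are current bill rates from {n_vendors} vendors for {role} roles \
across {locations}. Here is market benchmark data showing P25, P50, and P75 rates \
for the same roles and locations. Identify any vendor rates sitting above P65. \
Calculate the variance from P50 for each. Rank vendors by degree of overpricing. \
For the top two outliers generate a short vendor talking point I can use in a QBR.

BILL RATES (CSV):
{rates_csv}
BENCHMARKS (CSV):
{benchmark_csv}"""


def build_prompt(rates_csv, benchmark_csv, role):
    """Fill the sample prompt with counts and locations derived from the data."""
    rows = [r for r in csv.DictReader(io.StringIO(rates_csv)) if r["role"] == role]
    vendors = {r["vendor"] for r in rows}
    # dict.fromkeys removes duplicates while keeping first-seen order
    locations = list(dict.fromkeys(r["location"] for r in rows))
    return PROMPT_TEMPLATE.format(
        n_vendors=len(vendors),
        role=role,
        locations=" and ".join(locations),
        rates_csv=rates_csv,
        benchmark_csv=benchmark_csv,
    )


prompt = build_prompt(RATES_CSV, BENCHMARK_CSV, "Senior Data Engineer")
print(prompt)
```

With the Anthropic Python SDK, for example, the resulting string would be the `content` of a user message passed to `client.messages.create`; in Copilot you would paste it into the chat.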

What comes back is a structured table – vendor, role, location, their rate, the P50 benchmark, the variance as a percentage, and a plain‑language talking point for the conversation.
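Because a language model can slip on arithmetic, it is worth being able to reproduce that table deterministically. The sketch below computes variance from P50 and ranks vendors sitting above P65. The benchmark in the prompt supplies only P25, P50, and P75, so P65 is estimated here by linear interpolation between P50 and P75; that interpolation and the P75 values are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class RateRow:
    vendor: str
    role: str
    location: str
    bill_rate: float
    p50: float
    p75: float


def estimate_p65(p50, p75):
    """Assumed linear interpolation: P65 is 15/25 = 60% of the way from P50 to P75."""
    return p50 + 0.6 * (p75 - p50)


def variance_vs_p50(bill_rate, p50):
    """Percentage by which the bill rate exceeds (or trails) the P50 benchmark."""
    return (bill_rate - p50) / p50 * 100


def rank_outliers(rows):
    """Return (row, variance %) for rates above estimated P65, most overpriced first."""
    flagged = [
        (row, round(variance_vs_p50(row.bill_rate, row.p50), 1))
        for row in rows
        if row.bill_rate > estimate_p65(row.p50, row.p75)
    ]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Illustrative inputs only; P75 values are placeholders.
rows = [
    RateRow("Vendor A", "Senior Data Engineer", "Singapore", 96, 94, 104),
    RateRow("Vendor D", "Senior Data Engineer", "Singapore", 118, 94, 104),
    RateRow("Vendor E", "Senior Data Engineer", "Bangalore", 44, 38, 45),
]
for row, pct in rank_outliers(rows):
    print(f"{row.vendor} | {row.location} | ${row.bill_rate:.0f}/hr | P50 ${row.p50:.0f}/hr | +{pct}%")
```

With these inputs Vendor D ranks first at 25.5% above P50, matching the worked example. Vendor A at $96/hr sits above P50 but below the estimated P65 of $100/hr, so it is not flagged.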

What the output looks like

Vendor D – Senior Data Engineer – Singapore

Current bill rate: $118/hr | P50 benchmark: $94/hr

Variance: 25.5% above market

————————————————

Talking point:

"Your current rate for this role sits approximately 25% above the P50 benchmark for Singapore. We would like to discuss repositioning to a rate between $92 and $96 per hour for the next contract period, which aligns with current market. We are happy to share the benchmark data we are working from."

That is the conversation. You walk in with a number, a benchmark, and a specific ask. The vendor knows you have data. The dynamic is different before anyone speaks.
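The report block itself can be templated so every outlier is presented the same way. In this sketch the proposed range is P50 plus or minus a hypothetical `band` parameter: $2/hr around a $94 P50 reproduces the $92 to $96 ask in the Vendor D example. Variance is shown to one decimal place in the header and truncated to a whole percent in the talking point, matching "approximately 25%".

```python
def format_report_block(vendor, role, location, bill_rate, p50, band=2.0):
    """Render one above-market outlier in the layout of the example report.

    `band` is a hypothetical negotiating range in $/hr either side of P50.
    """
    variance = (bill_rate - p50) / p50 * 100
    low, high = p50 - band, p50 + band
    # int() truncates toward zero, so 25.5% reads as "approximately 25%"
    talking_point = (
        f'"Your current rate for this role sits approximately {int(variance)}% above '
        f"the P50 benchmark for {location}. We would like to discuss repositioning to a "
        f"rate between ${low:.0f} and ${high:.0f} per hour for the next contract period, "
        "which aligns with current market. We are happy to share the benchmark data "
        'we are working from."'
    )
    return "\n".join([
        f"{vendor} - {role} - {location}",
        f"Current bill rate: ${bill_rate:.0f}/hr | P50 benchmark: ${p50:.0f}/hr",
        f"Variance: {variance:.1f}% above market",
        "Talking point:",
        talking_point,
    ])


print(format_report_block("Vendor D", "Senior Data Engineer", "Singapore", 118, 94))
```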

Running This as a Monthly Workflow in Gumloop

The one‑off analysis is useful. The monthly workflow is transformative.

You build a simple Gumloop pipeline: your VMS or tracking spreadsheet exports bill rate data automatically on a schedule, Claude runs the variance analysis against a stored benchmark dataset, and the output gets delivered to your inbox or a shared folder as a formatted report.

Every month you get a view of which vendors have drifted above market, which roles are most exposed, and which conversations need to happen before the next QBR cycle. You stop being reactive – discovering rate problems at renewal – and start being proactive, catching drift as it happens.
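Catching drift means comparing this month's variance with last month's, not just reading the latest snapshot. A minimal sketch, assuming each monthly report is kept as a mapping from (vendor, role, location) to variance versus P50 in percent; the 2-percentage-point trigger is a hypothetical threshold, not a recommendation.

```python
def detect_drift(previous, current, min_increase_pts=2.0):
    """Flag line items that are above P50 this month and either crossed above
    P50 since last month or rose by at least `min_increase_pts` points.

    `previous` and `current` map (vendor, role, location) -> variance vs P50 in %.
    """
    drifted = []
    for key, now in current.items():
        before = previous.get(key)
        if before is None or now <= 0:
            continue  # no baseline yet, or currently at/below P50
        crossed_above = before <= 0
        rose = (now - before) >= min_increase_pts
        if crossed_above or rose:
            drifted.append((key, before, now))
    # Largest month-on-month movement first
    return sorted(drifted, key=lambda item: item[2] - item[1], reverse=True)


# Illustrative readings only
SG = ("Senior Data Engineer", "Singapore")
BLR = ("Senior Data Engineer", "Bangalore")
last_month = {("Vendor D", *SG): 22.0, ("Vendor A", *SG): -1.0, ("Vendor E", *BLR): 15.8}
this_month = {("Vendor D", *SG): 25.5, ("Vendor A", *SG): 3.2, ("Vendor E", *BLR): 15.8}

for (vendor, role, location), before, now in detect_drift(last_month, this_month):
    print(f"{vendor} {location}: {before:+.1f}% -> {now:+.1f}% vs P50")
```

Vendor E is already above market but has not moved, so it belongs in the standing report rather than the drift alert; Vendor A is flagged because it has just crossed P50.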

The setup takes a few hours. The ongoing effort is close to zero.

Beyond the Rate Conversation

Rate variance analysis is the entry point. Once you have the infrastructure in place – data flowing, Claude/Copilot doing the analysis – you can extend it in two directions.

Backwards: analyse historical rate trends by vendor to identify which suppliers have been consistently above market and by how much. This informs tier decisions and contract strategy, not just individual negotiations.
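The backwards view is an aggregation over the monthly reports. The sketch below assumes those reports have been accumulated as (month, vendor, variance %) rows; the readings are invented for illustration.

```python
from collections import defaultdict
from statistics import mean


def vendor_history_summary(history):
    """Summarise monthly variance-vs-P50 readings per vendor.

    `history` is an iterable of (month, vendor, variance_pct) tuples.
    """
    by_vendor = defaultdict(list)
    for _month, vendor, variance_pct in history:
        by_vendor[vendor].append(variance_pct)
    summary = [
        {
            "vendor": vendor,
            "months": len(values),
            "months_above_p50": sum(1 for v in values if v > 0),
            "avg_variance_pct": round(mean(values), 1),
        }
        for vendor, values in by_vendor.items()
    ]
    # Consistently overpriced vendors rise to the top
    return sorted(summary, key=lambda s: s["avg_variance_pct"], reverse=True)


# Illustrative readings only
history = [
    ("2025-01", "Vendor D", 22.0), ("2025-02", "Vendor D", 24.0), ("2025-03", "Vendor D", 25.5),
    ("2025-01", "Vendor A", -1.0), ("2025-02", "Vendor A", 0.5), ("2025-03", "Vendor A", 3.2),
]
for row in vendor_history_summary(history):
    print(row)
```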

Forwards: model the cost impact of bringing all outlier rates to P50. If your top three vendors repriced to market for their most overbilled roles, what is the annual saving? That number is worth knowing before you sit down to negotiate, and before you present to Finance.
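The forwards question is simple arithmetic once rates, P50 benchmarks, and headcounts sit in one place: for each line item, the saving is (bill rate minus P50) times annual billable hours times headcount. The sketch assumes 1,800 billable hours per contractor per year and made-up headcounts; substitute your own timesheet and headcount data.

```python
def annual_saving_at_p50(line_items, billable_hours_per_year=1800):
    """Estimate the annual saving if every rate above P50 were repriced to P50.

    `line_items` holds (vendor, bill_rate, p50, headcount) tuples.
    `billable_hours_per_year` is an assumption, not a standard figure.
    Rates already at or below P50 contribute nothing.
    """
    total = 0.0
    for _vendor, bill_rate, p50, headcount in line_items:
        total += max(0.0, bill_rate - p50) * billable_hours_per_year * headcount
    return total


# Illustrative rates and headcounts only
line_items = [
    ("Vendor D", 118.0, 94.0, 3),  # $24/hr over P50, 3 contractors
    ("Vendor E", 44.0, 38.0, 5),   # $6/hr over P50, 5 contractors
    ("Vendor A", 93.0, 94.0, 4),   # already below P50: no saving
]
print(f"${annual_saving_at_p50(line_items):,.0f}")  # $183,600
```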

The CWM function that can answer that question with a number rather than a shrug is the function that gets taken seriously in budget conversations.

The Honest Caveat

Bill rate is one dimension of vendor value. A vendor billing $118/hr and consistently filling roles in ten days is a different commercial proposition from a vendor billing $95/hr and taking six weeks to fill. Rate analysis without fill rate and quality data tells an incomplete story.
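One way to keep the report honest is to put rate variance and time-to-fill side by side. The sketch below does that for the two vendors in the paragraph above, against the $94/hr P50; the 10% premium threshold, the 21-day fill target, and the name Vendor F are hypothetical. The labels describe the trade-off and deliberately do not rank vendors.

```python
def value_view(vendors, premium_threshold_pct=10.0, target_days_to_fill=21):
    """Pair rate variance with time-to-fill so neither is read alone.

    `vendors` holds (name, bill_rate, p50, median_days_to_fill) tuples.
    Both thresholds are hypothetical; set them from your own programme targets.
    """
    view = []
    for name, bill_rate, p50, days in vendors:
        variance = (bill_rate - p50) / p50 * 100
        rate_label = "premium rate" if variance > premium_threshold_pct else "market rate or below"
        speed_label = "fast fill" if days <= target_days_to_fill else "slow fill"
        view.append((name, round(variance, 1), days, f"{rate_label}, {speed_label}"))
    return view


vendors = [
    ("Vendor D", 118.0, 94.0, 10),  # $118/hr, fills in ten days
    ("Vendor F", 95.0, 94.0, 42),   # $95/hr, fills in six weeks (hypothetical name)
]
for row in value_view(vendors):
    print(row)
```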

Use the rate variance report as a prompt for the right conversation, not as a case for the cheapest vendor. The goal is defensible rates, not the lowest rates. Those are different things and conflating them leads to vendor relationships that look good on a spreadsheet and perform poorly in practice.

What This Means for the CWM Function

Vendor management is one of the clearest places where the CWM function can demonstrate commercial value. Every percentage point of rate reduction across a $20 million annual contingent spend is $200,000. That math is easy for Finance to follow.

The teams that have historically struggled to make this case are the ones walking into QBRs without data. AI makes the data available. The commercial conversation becomes possible. And the CWM function stops being the team that manages process and starts being the team that manages cost.

That is a different seat at the table.