Your SOW Is Costing You More Than the Vendor Is.

Your vendor submitted the SOW on a Friday afternoon.

Your procurement lead glanced at it. Looked standard. Approved it Monday morning.

Eighteen months later, your legal team is explaining what "ongoing support and assistance as required" means to a labour tribunal.

This is not an unusual story. It is a pattern. The SOW is the most underreviewed document in contingent workforce management. It looks like a contract. It is signed like a contract. But most SOWs in project‑based contingent programs are written loosely enough to create the exact co‑employment exposure they are supposed to prevent.

The good news is that the gaps are consistent. The same five problems appear across most SOWs I have reviewed. And AI loaded with your own policy documents can run a first‑pass review that screens for all five before legal ever sees the document.

Why SOWs Fail

The SOW's job is to define a project relationship, not an employment one. It does this by anchoring the engagement to outcomes rather than time and direction. Defined deliverables. Milestone‑based payments. The vendor controls how the work gets done. The client defines what gets delivered.

When that logic breaks down – and it breaks down in predictable ways – the SOW stops protecting you and starts exposing you.

- Vague deliverables. "Ongoing support," "advisory services," "as‑needed assistance." These phrases describe employment, not a project. A regulator reading them sees a worker under direction, not a vendor delivering an outcome.

- No milestone-linked payments. If the SOW pays monthly against time spent rather than against deliverables completed, the payment structure undermines the project framing. It looks like a salary.

- Control language. Phrases like "must be available during business hours," "will attend all team meetings," "reports to the Head of Product." Each of these is a control indicator. Enough of them together and you have described an employee.

- Exclusivity clauses. A genuine vendor works with multiple clients. An exclusivity requirement – even an informal one – is an employment indicator. If your SOW prevents the vendor from working with others, that is a problem.

- Unclear IP ownership. Who owns the work product? If the SOW is silent on this, or assigns ownership in a way that is inconsistent with a vendor relationship, you have both legal and commercial exposure.

Most SOWs have at least two of these. Many have four.
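
Some of these problems leave textual fingerprints, so a crude keyword pre-screen can run before any AI review. The sketch below is only an illustration: the pattern lists and the `prescreen` function are hypothetical, built from the example phrases above, and would need tuning against your own SOW standards. It also treats a SOW that never mentions IP as a flag, which is an assumption about how "silent on IP" might be detected.

```python
import re

# Hypothetical phrase patterns per problem category (assumed, not a standard list).
RED_FLAG_PATTERNS = {
    "vague_deliverables": [r"ongoing support", r"advisory services",
                           r"as[- ]needed", r"as required"],
    "time_based_payment": [r"per (hour|day|month)",
                           r"monthly (fee|payment|retainer)"],
    "control_language": [r"must be available", r"attend all team meetings",
                         r"reports to"],
    "exclusivity": [r"exclusive(ly)?"],
}


def prescreen(sow_text: str) -> dict[str, list[str]]:
    """Return the matched phrases grouped by category (case-insensitive)."""
    hits: dict[str, list[str]] = {}
    for category, patterns in RED_FLAG_PATTERNS.items():
        found = [
            m.group(0)
            for pattern in patterns
            for m in re.finditer(pattern, sow_text, flags=re.IGNORECASE)
        ]
        if found:
            hits[category] = found
    # Unclear IP ownership often shows up as an absence, so flag a silent SOW.
    if not re.search(r"intellectual property|\bIP\b", sow_text, flags=re.IGNORECASE):
        hits["ip_ownership"] = ["no IP clause found"]
    return hits


sample = ("The vendor will provide ongoing support as required "
          "and reports to the Head of Product.")
print(prescreen(sample))
```

A keyword match like this cannot judge context, so it is at best a quick sort of which SOWs need the closer review described next.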

Where AI Fits

The traditional path is: vendor sends SOW, internal team reviews it, legal reviews it if it looks significant, everyone signs. Legal review costs time and money. Internal review is inconsistent – some procurement leads catch control language, others do not know what to look for.

AI shifts this upstream. Before the SOW goes to legal, before it goes to the business for sign‑off, Claude (or whichever LLM you use as a co‑pilot) reviews it against a defined checklist and returns a structured assessment. High‑risk clauses flagged. Recommended edits provided. Reasoning shown.

What makes this work is loading Claude with your own program documents as permanent context. Claude has a feature called Projects – a configured workspace where uploaded documents stay available across every conversation. You load your classification policy, your SOW standards, your jurisdiction‑specific guidelines for Singapore, India, the UK, wherever you operate. Every review then happens against your actual program rules, not generic AI knowledge.

The Practical Walkthrough

Setting up the Claude Project

You create a Project in Claude and upload three or four documents as context, typically covering:

- Your contingent workforce classification policy. The section on SOW versus T&M versus IC is the critical part.

- Your SOW standards or template. If you have a preferred structure, load it. Claude will use it as the benchmark.

- Any jurisdiction‑specific guidance relevant to your program. MOM guidelines for Singapore. IR35 rules for the UK. DOLE guidelines if you operate in the Philippines.

This takes thirty minutes to set up once. Every subsequent SOW review draws on these documents automatically.

Running the review

You paste the SOW into the Project conversation and give Claude a clear instruction. Something like:

"Review this SOW against our classification policy and SOW standards. Flag any of the following: vague or open‑ended deliverables, time‑based rather than milestone‑based payment terms, language indicating employer control over how work is performed, exclusivity requirements, and unclear IP ownership. For each flag, explain the risk and suggest an edit. Output a structured assessment."

What comes back is not a legal opinion. It is a practitioner‑grade review – the same structured thinking a senior CWM lead would apply, applied consistently, in under ten minutes.
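
If several people run these reviews, it can help to generate the instruction from a single checklist so everyone sends the same wording. This is a minimal sketch: `CHECKLIST` and `build_review_prompt` are hypothetical names, the wording is taken from the instruction quoted above, and the function only builds text to paste into the Project conversation. It does not call any AI service.

```python
# The five checks, worded as in the review instruction above.
CHECKLIST = [
    "vague or open-ended deliverables",
    "time-based rather than milestone-based payment terms",
    "language indicating employer control over how work is performed",
    "exclusivity requirements",
    "unclear IP ownership",
]


def build_review_prompt(sow_text: str, checklist: list[str] = CHECKLIST) -> str:
    """Assemble the review instruction followed by the SOW text."""
    items = "\n".join(f"{i}. {item}" for i, item in enumerate(checklist, start=1))
    return (
        "Review this SOW against our classification policy and SOW standards.\n"
        "Flag any of the following:\n"
        f"{items}\n"
        "For each flag, explain the risk and suggest an edit.\n"
        "Output a structured assessment.\n\n"
        "SOW text:\n"
        f"{sow_text.strip()}"
    )


prompt = build_review_prompt("2.1 Provide ongoing product design support as required.")
print(prompt)
```

Keeping the checklist in one place means that adding a sixth check later changes every reviewer's prompt at once.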

What the output looks like

Claude returns something like this:

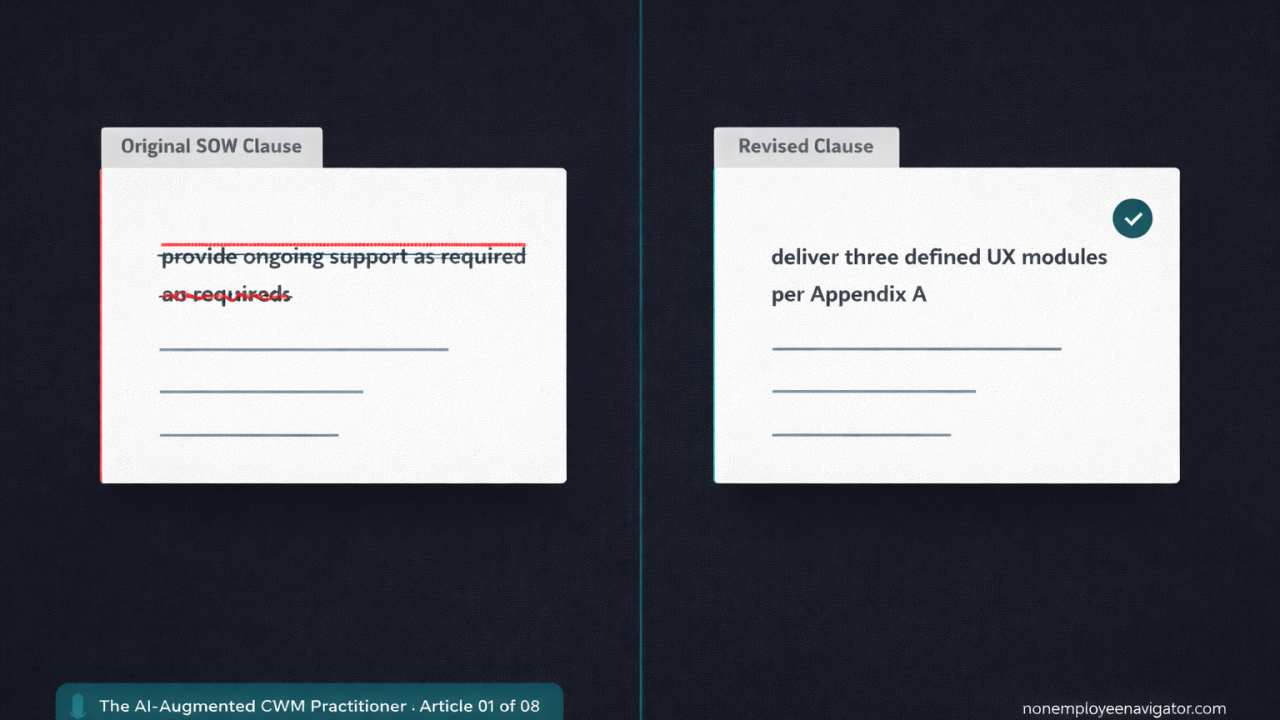

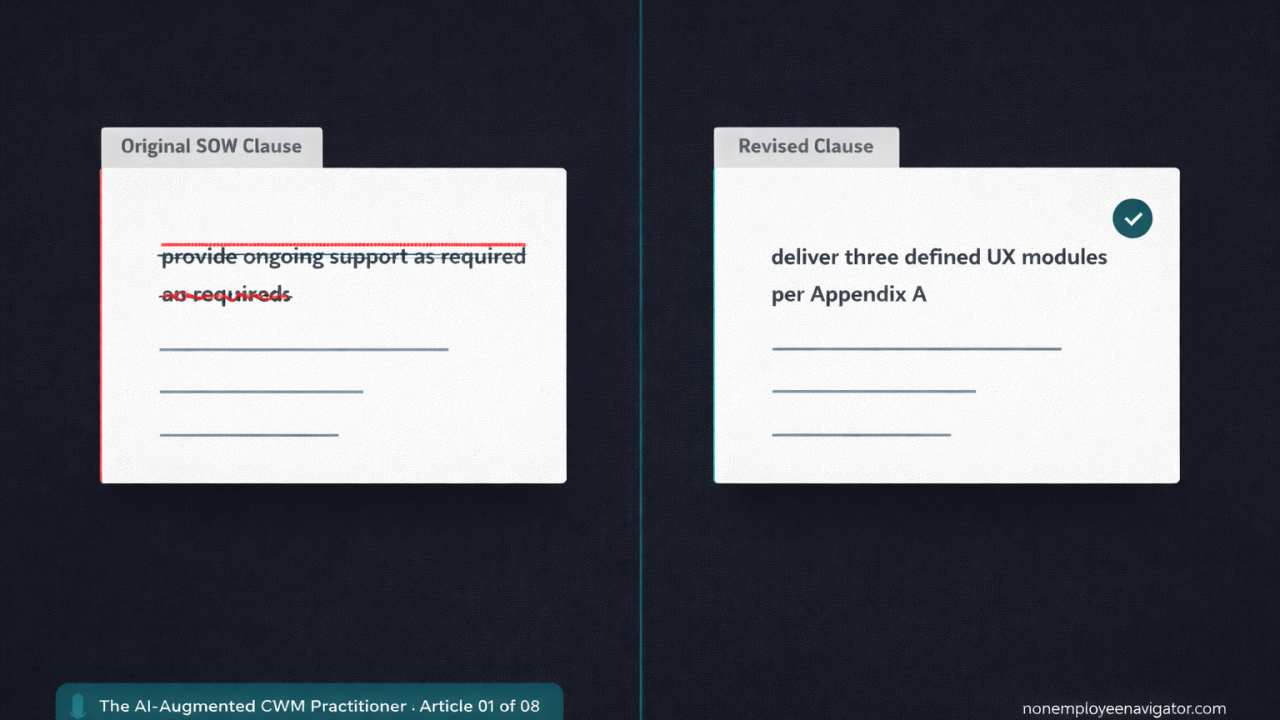

Flag 1 – Vague deliverables. Clause 2.1 reads "provide ongoing product design support as required." This language describes availability under direction rather than a defined project outcome. Recommended edit: replace with specific deliverables and acceptance criteria, for example "deliver finalised UX designs for three defined product modules, as specified in Appendix A."

Flag 2 – Control language. Clause 4.3 requires the vendor to "be present at the client's office every Tuesday and Thursday." Physical attendance requirements set by the client are a control indicator. Recommended edit: remove the attendance requirement, or reframe on‑site presence as being at the vendor's discretion in managing delivery.

Flag 3 – Payment structure. Clause 5.1 sets monthly payment regardless of deliverable completion. This mirrors an employment payment pattern. Recommended edit: tie payment to milestone completion as defined in the project schedule.

Three flags. Clear reasoning. Specific edits. The procurement lead knows exactly what to send back to the vendor before anyone picks up the phone to legal.
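
To track flags across many SOWs, each one can be captured as a small record. The sketch below encodes the three example flags above. The `Flag` fields and the `vendor_note` helper are hypothetical names chosen for illustration, not a format Claude is guaranteed to return.

```python
from dataclasses import dataclass


@dataclass
class Flag:
    clause: str            # clause number in the SOW
    category: str          # which checklist item was triggered
    risk: str              # why the clause is a problem
    recommended_edit: str  # what to ask the vendor to change


# The three example flags from the review above.
flags = [
    Flag("2.1", "Vague deliverables",
         "Describes availability under direction rather than a defined project outcome.",
         "Replace with specific deliverables and acceptance criteria."),
    Flag("4.3", "Control language",
         "Client-set physical attendance is a control indicator.",
         "Remove the attendance requirement or leave presence to the vendor."),
    Flag("5.1", "Payment structure",
         "Monthly payment regardless of completion mirrors an employment pattern.",
         "Tie payment to milestone completion in the project schedule."),
]


def vendor_note(items: list[Flag]) -> str:
    """Render one line per flag, ready to send back to the vendor."""
    return "\n".join(
        f"Clause {f.clause} ({f.category}): {f.recommended_edit}" for f in items
    )


print(vendor_note(flags))
```

Stored this way, the same records can feed a tracker that shows which categories recur by vendor or business unit.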

What This Changes

The SOW review workflow in most programs is a bottleneck. Legal is busy. Reviews take days. Business pushes back on delays. The SOW goes through with known gaps because the cost of the delay feels higher than the cost of the risk.

AI removes the bottleneck. The first‑pass review is immediate and systematic. Legal only sees SOWs that have already been reviewed and cleaned up. Their time goes to the genuinely complex cases, not the obvious ones.

The CWM function stops being the team that slows things down. It becomes the team that moves things through cleanly.

The Honest Caveat

Claude reviews what is in the document. It does not know what is happening outside it.

A SOW might be technically clean while the actual engagement is functionally an employment relationship – the vendor is on‑site daily, takes direction from a manager, and has been working exclusively with your company for two years. The document passes. The practice fails.

AI‑assisted SOW review is a document control tool, not a program governance tool. You still need your engagement managers asking the right questions about how work is actually being performed. The document review is the floor, not the ceiling.

What This Means for the CWM Function

SOW quality is a proxy for program maturity. In programs with weak governance, SOWs drift. Language gets copied from old templates. Vendors push back on tight scopes. Business leaders want flexibility, so they resist defined deliverables.

The CWM function that holds the line on SOW quality – consistently, with a documented review process – is the function that avoids the $200,000 legal dispute that nobody saw coming because the SOW said "as required."

AI makes that consistency achievable without hiring a dedicated contracts reviewer.